Why only ask the speaker when you can use AI to ask the data too?

Or, why vibe coding in seminars and meetings might help accelerate science and progress.

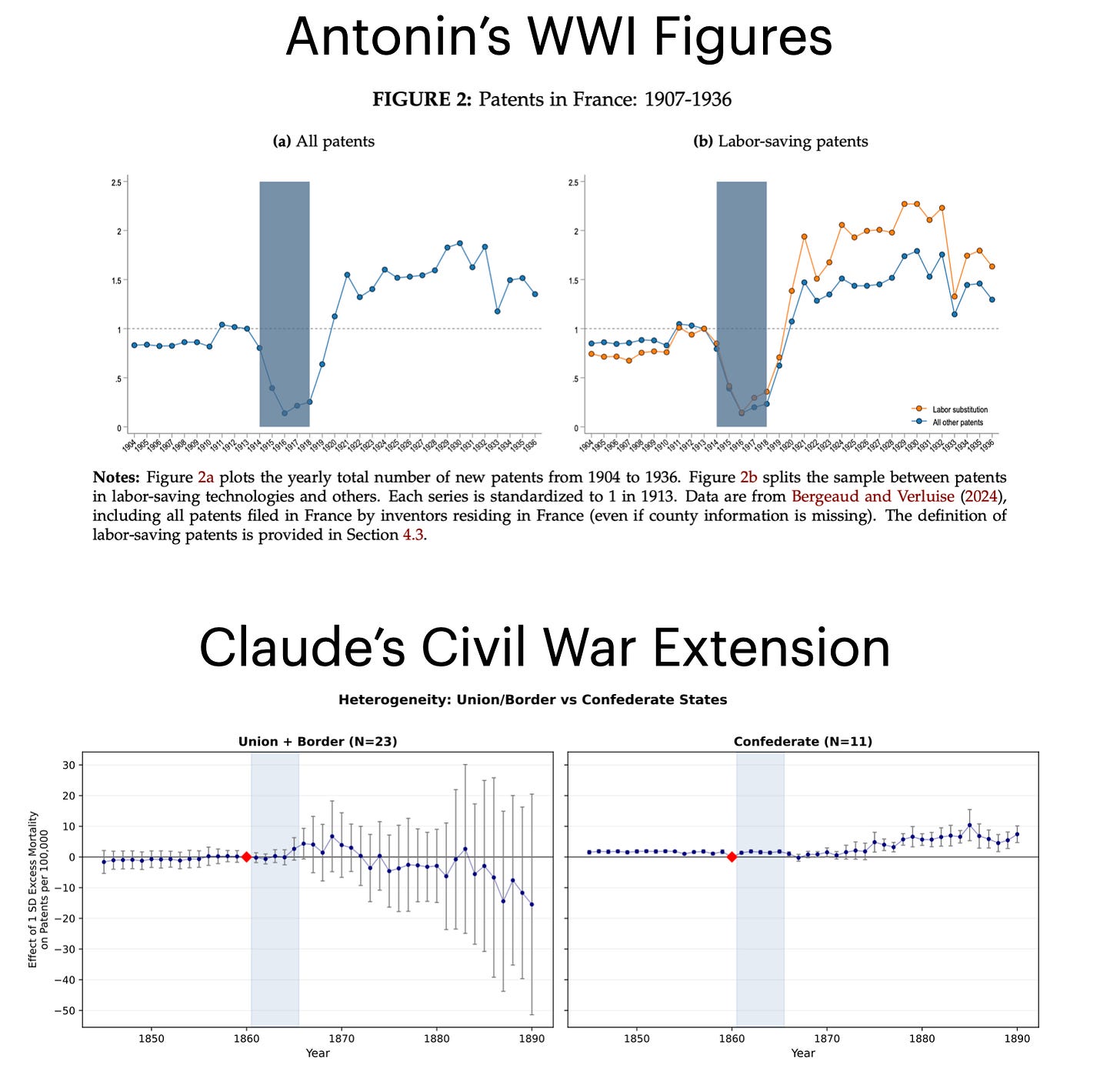

In March, Antonin Bergeaud gave a fun talk at HBS exploring how WWI impacted innovation in France. I asked him how his findings might generalize to other countries and wars. He had a solid answer, but academics always want to know more and always have another question to ask. I decided to fire up Claude Code and see if it could make some progress on this question using real data within the 50 minutes we had left in the seminar.

I dumped his paper into a folder and asked Claude Code to apply his approach to the US Civil War. I was sure public US patent data went back that far, and I had a sense that mortality data for the Civil War had to be available somewhere online. My first prompts were simple: find Civil War mortality, ideally at the county level but state level would do for now, and find historical patent data covering the 19th century. With a little back and forth, it returned Barceló et al. (2024) for mortality, HistPat for patents, and a path for pulling additional census data. I kept pushing for county-level analysis, but the mortality data only resolved cleanly at the state level, so I settled for a less granular analysis. About 43 minutes of Claude work, plus my own thinking over roughly a dozen prompts while Antonin presented, produced the attached figure, in Antonin’s style. Code and data here: https://github.com/remkoning/escaping-labor-scarcity-us
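For readers who want a feel for what a state-level comparison like this involves, here is a minimal sketch. It is not the code in the repo and not necessarily the method in Antonin’s paper: the state labels, years, mortality rates, and patent counts are all made-up placeholders, and the median split is just one simple way to compare high- and low-mortality states.

```python
from statistics import mean, median

# Placeholder values for illustration only; NOT real mortality or patent data.
# Hypothetical wartime deaths per capita, keyed by (hypothetical) state label.
mortality_rate = {"A": 0.02, "B": 0.05, "C": 0.08, "D": 0.11}

# Hypothetical patent counts keyed by (state, year): one pre-war and one post-war year.
patents = {
    ("A", 1855): 10, ("A", 1875): 14,
    ("B", 1855): 12, ("B", 1875): 18,
    ("C", 1855): 8,  ("C", 1875): 20,
    ("D", 1855): 9,  ("D", 1875): 27,
}


def patent_change(state, pre_year=1855, post_year=1875):
    """Change in a state's patent count between the pre- and post-war year."""
    return patents[(state, post_year)] - patents[(state, pre_year)]


def high_vs_low_gap(rates):
    """Mean patent change in above-median-mortality states minus the rest."""
    cutoff = median(rates.values())
    high = [patent_change(s) for s, m in rates.items() if m > cutoff]
    low = [patent_change(s) for s, m in rates.items() if m <= cutoff]
    return mean(high) - mean(low)


gap = high_vs_low_gap(mortality_rate)
print(gap)
```

A positive gap would mean patenting grew more in the high-mortality states in this toy data. A real analysis would need many more years, controls, and ideally county-level variation.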

It’s far, far, far from a paper. But it’s an extension, and a promising one. Claude even pointed out how I might move from a state-level to a county-level analysis, which would make this much more credible, but it would require downloading 100GB of historical data and signing up for an API key, both of which would have taken longer than the 50 minutes I had. But the data is there, the sources are documented, and the code is generated. One could start building extensions, comparisons, and more.

It also opens up questions about how we should think about doing science going forward.

For example, why do we ask questions in seminars instead of just doing the analyses ourselves? One way to view this is that the cost of getting up to speed on someone else’s project is simply way too high. One researcher bears the fixed cost, and everyone else asks them questions. The authors (or their RAs!) become the bottleneck.

What’s crazy is that I was able to take Antonin’s paper and extend it without any of the authors lifting a finger. Imagine if, in seminars, the author shared their data with us through a GitHub repo (or a secure shared workspace if needed). Then everyone just hacks on it for 90 minutes, using AI to ask questions, try new analyses, and run robustness checks, and everyone’s work is collectively shared back with the authors, making the paper better. In a seminar with 20 awesome researchers, the paper suddenly gets at least 20 hours better! The speaker could answer questions, help clarify any confusion, and serve as “tech support” for everyone in the room. Instead of having to wait to ask questions and then wait until your RAs implement a seminar comment, it just happens right there, in the room, in parallel. Suddenly the seminar isn’t such a bottleneck, science can move faster, and papers (hopefully) improve!

There are echoes here of a recent paper showing how AI has led to more, but not better, submissions and reviews at Organization Science. On the one hand, I could try to submit my extension to a journal and front-run the authors, which would be a terrible thing to do and would just lead to more slop (again, my analysis is far from a paper; it’s at best the spark of an idea). On the other hand, we can try to reimagine how we do our science so that it benefits from AI. If we can work out how to map AI into scientific production, akin to how AI-native startups are reorganizing their firms and talent around AI, I think the future of (social) science is incredibly bright. If we instead just dump AI into our existing institutions and organizations, we are going to see an incredible amount of slop.

PS: I think this model would work well in firms too! Instead of meetings where someone speaks the entire time, why not have them share their analysis, research, and so on, and then have everyone vibe code new analyses, extensions, and questions? Obviously, sometimes you want everyone just listening to the same thing, but AI has lowered the cost of “jumping into” a new analysis in ways that make this sort of parallel building exciting and possible.